- Amazon S3 Batch Operations

- Amazon S3 Batch Operations | HN thread

- AWS Cost and Usage Report

- Creating an AWS Cost and Usage Report

- Export Billing Data to BigQuery

- View Your Cost Trends With Billing Reports

- Develop Hundreds of Kubernetes Services at Scale with Airbnb

- Two Key Docker Benefits and How to Attain Them

- Container Training

- Container Training | GitHub

- Getting Started With Kubernetes and Container Orchestration

Welcome to this week's Industry News episode. The idea of this weekly episode is that I review interesting links that I found throughout the week, so that you can check them out too. Alright, so let's dive in.

Amazon S3 Batch Operations

If you are using AWS S3, then you will probably want to know about the recently released batch operations feature. Typically, you would use S3 to store tons of data, and this means lots and lots of files. So, being able to do things in batch across a bunch of files is a pretty nice feature (vs. doing things one by one). But, while reading about this on Hacker News, I did come across an interesting comment about data migration from S3 to Glacier. When doing things in bulk, make sure you run the numbers and check what it will cost you. It looks like this person generated an $11k bill just by running a few tests with lots of files.
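As a rough idea of what "running the numbers" can look like, here is a minimal Python sketch. The function name and the prices are placeholders I made up for illustration, not real AWS rates, so swap in the current numbers from the pricing pages before trusting the result.

```python
def estimate_bulk_cost(object_count, price_per_1000_requests, price_per_object=0.0):
    """Rough cost of touching every object once in a bulk job.

    Both prices are assumptions supplied by the caller; nothing here
    is looked up from AWS.
    """
    request_cost = (object_count / 1000) * price_per_1000_requests
    per_object_cost = object_count * price_per_object
    return request_cost + per_object_cost


# Hypothetical test run: 200 million small files, made-up request price.
objects = 200_000_000
cost = estimate_bulk_cost(objects, price_per_1000_requests=0.05)
print(f"{objects:,} objects -> ${cost:,.2f}")
```

The point is that per-request charges scale with the number of files, not the amount of data, so a bucket full of tiny objects can get expensive fast.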

You can check it out at Amazon S3 Batch Operations along with the HN thread.

Keeping Cloud Costs Under Control

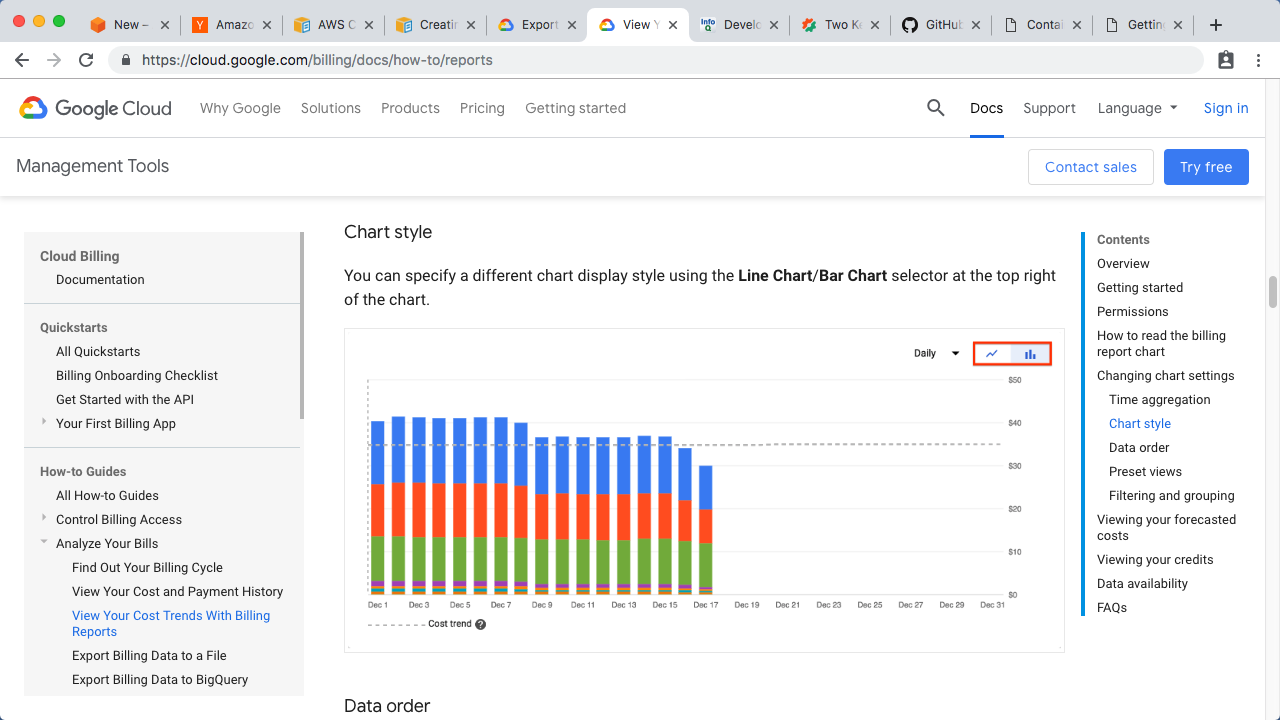

So, this surprise $11k bill got me thinking, and I wanted to share my strategy for keeping costs under control when you are using cloud providers. I have a dashboard that automatically gets updated hourly and is displayed in my office. It looks like this, where you have a rolling bar chart, one bar per day, and each colour here shows what product consumption looked like on that day. You can also see the total cost per day over here. Using something like this will allow you to quickly spot odd cloud consumption. The key here is to display this in the office on a TV or monitor somewhere, so it is in your face without you needing to dig around for it. This is pretty simple, but it could help you save many thousands of dollars, and it allows you to quickly jump on things before they go off the rails.

Alright, so how do you actually create something like this? Well, on AWS you can use the Cost and Usage Report feature, and this page walks you through creating it. Typically, you would load this data into a database like Redshift, or point a tool like QuickSight at it, and then you can just use whatever your normal dashboarding tools are.

On Google Cloud, you can use the Export Billing Data to BigQuery feature, which does the same thing. The cool thing about exporting your billing data to a database is that you can then run all types of custom SQL statements against it when you are trying to debug something. This is so much better than trying to debug PDF files of your billing data.
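To give a feel for the kind of query I mean, here is a small sketch that uses an in-memory SQLite database as a stand-in for Redshift or BigQuery. The `billing` table, its columns, and the rows are all made up for illustration; the real AWS and Google Cloud exports have their own, much wider schemas.

```python
import sqlite3

# In-memory stand-in for the billing database (hypothetical schema).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE billing (usage_date TEXT, product TEXT, cost REAL)")

# Made-up sample rows, one per line item.
rows = [
    ("2019-05-01", "storage", 12.50),
    ("2019-05-01", "compute", 40.00),
    ("2019-05-02", "storage", 13.00),
    ("2019-05-02", "compute", 41.25),
    ("2019-05-02", "compute", 8.75),
]
conn.executemany("INSERT INTO billing VALUES (?, ?, ?)", rows)


def daily_cost_by_product(conn):
    """Total cost per day and product: the data behind one stacked bar per day."""
    query = """
        SELECT usage_date, product, SUM(cost) AS total_cost
        FROM billing
        GROUP BY usage_date, product
        ORDER BY usage_date, product
    """
    return conn.execute(query).fetchall()


for usage_date, product, total in daily_cost_by_product(conn):
    print(usage_date, product, f"${total:.2f}")
```

Once the data is in a real database, the same `GROUP BY` idea is what feeds a bar chart with one bar per day and one colour per product.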

This has worked really well for me. I use this in addition to billing budget alerts. The problem I have with billing alerts is that they just tell you something weird is happening, and then you need to dig into it. If you have something like this bar chart here, then you should be able to quickly spot when things shoot up, or when one product all of a sudden consumes tons of resources. So, this chart not only tells you when things are weird, but also gives you a good place to go and look.
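If you wanted to do the same eyeball check in code, a minimal sketch might look like the one below. This is not how my dashboard works; the function name, the 2x threshold, and the sample numbers are all assumptions for illustration.

```python
from statistics import mean


def find_spikes(history, factor=2.0):
    """Flag products whose latest daily cost is well above their recent average.

    history maps a product name to a list of daily costs, oldest first.
    A product is flagged when its latest day exceeds `factor` times the
    average of the earlier days. The threshold is an arbitrary assumption.
    """
    spikes = {}
    for product, costs in history.items():
        if len(costs) < 2:
            continue  # not enough history to compare against
        baseline = mean(costs[:-1])
        latest = costs[-1]
        if baseline > 0 and latest > factor * baseline:
            spikes[product] = (baseline, latest)
    return spikes


# Made-up daily costs per product.
history = {
    "storage": [12.0, 13.0, 12.5, 12.8],
    "compute": [40.0, 41.0, 39.0, 150.0],
}
for product, (baseline, latest) in find_spikes(history).items():
    print(f"{product}: ${latest:.2f} today vs ${baseline:.2f} average")
```

Like the chart, this tells you both that something is off and which product to go look at first.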

Develop Hundreds of Kubernetes Services at Scale with Airbnb

Next up, I wanted to share this InfoQ page, where there is a pretty good video and transcript of how Airbnb is using Kubernetes. The talk walks through some of the things Airbnb learned along the way. I always like checking out how these bigger companies are using things like Kubernetes, as they will likely share some good war stories and lessons learned, which might save you some time down the road too.

You can check it out at Develop Hundreds of Kubernetes Services at Scale with Airbnb.

Two Key Docker Benefits and How to Attain Them

Finally, I wanted to point out this cool post by Jérôme about two key benefits of using Docker. I had the opportunity of meeting and working with Jérôme a few times when I was at Docker. I thought this post was pretty good at highlighting the developer side of things, like when you want to work on your local machine, vs. deploying things into production. There is tons of focus on the production side of things, but honestly that is only half the story, and I am constantly using Docker on my local machine too.

By the way, Jérôme has an awesome free workshop on containers that he has been working on, and delivering around the world, for a few years. There is tons of material here on the GitHub pages too. He also has a website called Container Training with a bunch of slide decks and video recordings of when he has delivered this in the past. As a quick start, I’d suggest checking out the slide decks, as they are packed with useful information. This one on Getting Started With Kubernetes and Container Orchestration clocks in at 411 slides, and it typically takes a few hours to work through. I highly recommend checking these out.

Alright, that’s it for this episode. Thanks for watching. Cya next week. Bye.